Unreal Engine 5 | rendering differences to path tracer

Written by Julian Pössnicker in May 2022

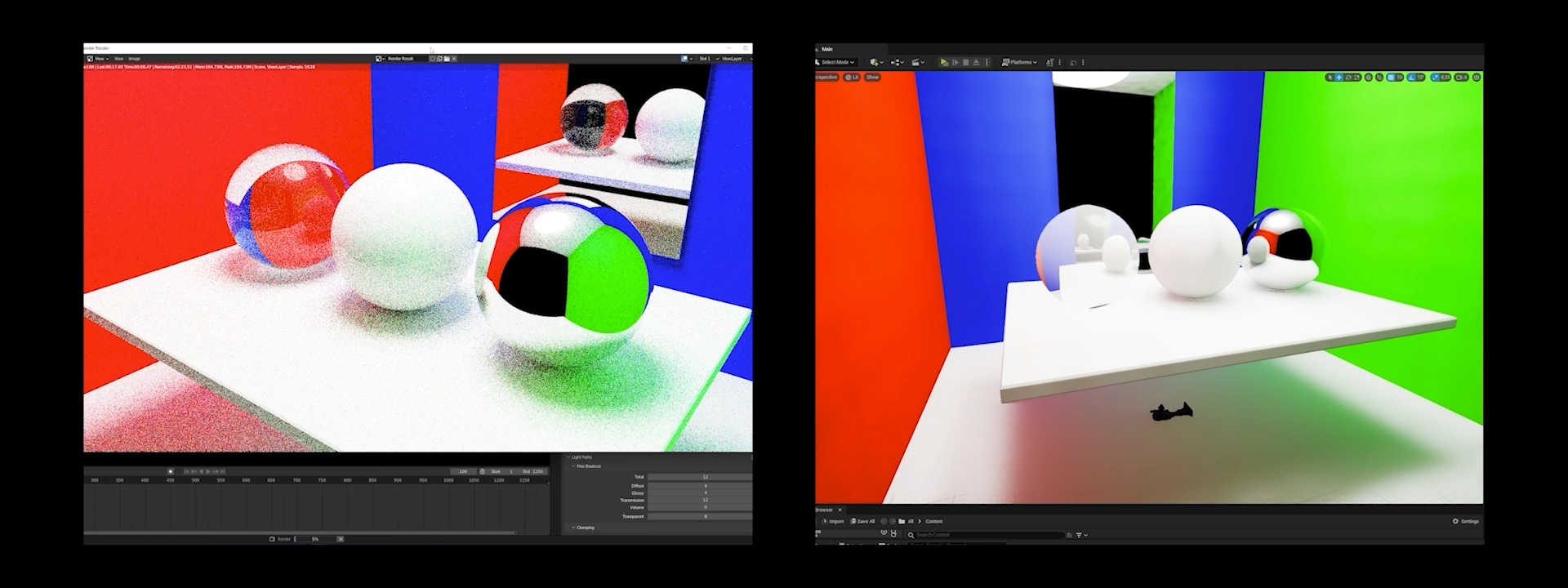

This article is about the rendering differences between game engines and ray tracers. As presented in my earlier articles, modern game engines can create similar-looking imagery in far less computing time, and the drawbacks and resulting artifacts of game engine renderings are minimal. But what sacrifices must be made to display similar graphical features in game engines, and what are the differences?

The following chapter will describe the rendering fundamentals of 3D graphics. The later chapters will focus on the differences between game engine rendering and path tracer rendering techniques.

Rendering Pipeline | 3D Geometry

In 3D graphics, the two most common ways to define and display 3D objects are parametric patches and polygons. Parametric patches describe the object with mathematical functions and are mostly used in CAD. This representation is, in principle, infinitely detailed, but it is very expensive to compute. The much more common approach for displaying objects in CG is to use polygons.

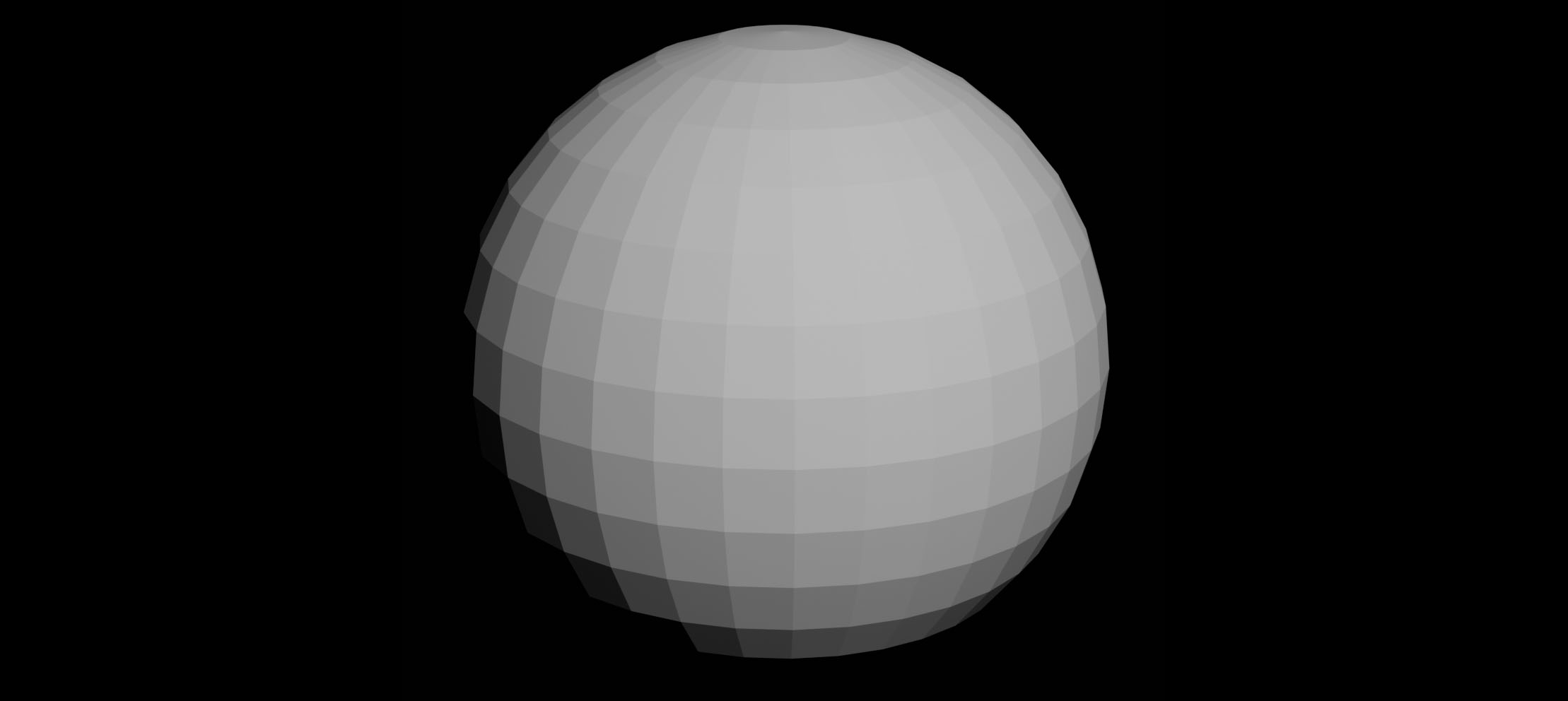

Polygons are small flat surfaces with at least three corner points, which are called vertices (singular: vertex). To model an object, many polygons are combined to approximate its geometry. The smaller and more numerous the polygons, the more detail the geometry can show. The downside is that compute time increases with the polygon count.
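To make the trade-off between polygon count and detail concrete, here is a minimal sketch in Python. It approximates a flat disc with a fan of triangles and measures how far the mesh area is from the true area. The function names (`circle_fan`, `mesh_area`) and the vertex/index layout are assumptions made for this illustration, not the format of any particular engine.

```python
import math

def circle_fan(segments, radius=1.0):
    """Approximate a disc with a triangle fan: one centre vertex plus `segments` rim vertices."""
    vertices = [(0.0, 0.0)]
    for i in range(segments):
        angle = 2.0 * math.pi * i / segments
        vertices.append((radius * math.cos(angle), radius * math.sin(angle)))
    # Each triangle stores three indices into the shared vertex list.
    triangles = [(0, 1 + i, 1 + (i + 1) % segments) for i in range(segments)]
    return vertices, triangles

def triangle_area(a, b, c):
    """Area of a 2D triangle from the cross product of two of its edges."""
    return 0.5 * abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]))

def mesh_area(vertices, triangles):
    return sum(triangle_area(vertices[i], vertices[j], vertices[k]) for i, j, k in triangles)

# More polygons bring the mesh closer to the true disc area (pi for radius 1).
for segments in (6, 24, 96):
    verts, tris = circle_fan(segments)
    print(segments, "triangles, area error:", math.pi - mesh_area(verts, tris))
```

The error shrinks as the number of triangles grows, while the amount of data to store and process grows with it.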

Rendering Pipeline | Rasterization

In the next step, the objects are rasterized. This process divides the camera view into pixels. For each pixel, the parts of the polygons that cover it are drawn. The polygons are also sorted by depth, and those hidden behind others are discarded.
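The two ideas in this step, testing which pixels a polygon covers and discarding hidden surfaces, can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: triangles are already in 2D screen space, each triangle has a single constant depth, and visibility is resolved with a per-pixel depth buffer. The names `edge` and `rasterize` are hypothetical.

```python
def edge(a, b, p):
    """Signed area of (a, b, p); its sign tells on which side of the edge a -> b the point p lies."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize(triangles, width, height):
    """triangles: list of (v0, v1, v2, depth, label) in screen space.
    Returns a grid of labels; '.' marks pixels that no triangle covers."""
    image = [["." for _ in range(width)] for _ in range(height)]
    zbuffer = [[float("inf")] * width for _ in range(height)]
    for v0, v1, v2, depth, label in triangles:
        for y in range(height):
            for x in range(width):
                p = (x + 0.5, y + 0.5)  # sample at the pixel centre
                w0, w1, w2 = edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p)
                # Inside if the point is on the same side of all three edges.
                inside = (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0)
                # Keep the fragment only if it is nearer than what was drawn before.
                if inside and depth < zbuffer[y][x]:
                    zbuffer[y][x] = depth
                    image[y][x] = label
    return image

scene = [
    ((0, 0), (8, 0), (0, 8), 2.0, "A"),  # farther triangle
    ((2, 2), (8, 2), (8, 8), 1.0, "B"),  # nearer triangle, partly covering A
]
image = rasterize(scene, 8, 8)
print("\n".join("".join(row) for row in image))
```

Where the two triangles overlap, the nearer one wins regardless of the order in which they are drawn. Real rasterizers interpolate depth per pixel and only visit pixels near each triangle, which this sketch leaves out.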

Shading | local vs global

Lighting and material calculations are done in the shading step. There are two major families of shading techniques, which differ in accuracy and speed. Local shading is mostly used in 3D applications where rendering speed matters, such as games. Global shading techniques are much slower to compute but much more accurate. They are mostly used in offline renderers and ray tracers such as Redshift, Arnold or Cycles-X.

Local Shading | Gouraud, and Phong

Local shading algorithms only calculate the direct interaction between a light source and an object. Light that bounces between objects is not calculated.

One of the simplest local shading algorithms is the polyhedral (flat) shading model. It computes a single shade per polygon, so it cannot render smooth geometry: the individual polygons remain clearly visible.
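The simple per-polygon model described above can be sketched as follows. The example assumes a basic Lambert diffuse term (brightness proportional to the cosine between the surface normal and the light direction), which is a common choice but an assumption here; the helper names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def face_normal(a, b, c):
    """Unit normal of a triangle whose vertices are given in counter-clockwise order."""
    return normalize(cross(sub(b, a), sub(c, a)))

def flat_shade(triangle, light_dir):
    """One diffuse intensity for the whole triangle; light_dir points toward the light."""
    return max(0.0, dot(face_normal(*triangle), normalize(light_dir)))

# Two neighbouring faces of a folded "roof" with its ridge at x = 1.
left_face = ((0, 0, 0), (1, 0, 1), (1, 1, 1))
right_face = ((1, 0, 1), (2, 0, 0), (2, 1, 0))
light = (1, 0, 2)  # light coming from the upper right

print("left face: ", round(flat_shade(left_face, light), 3))
print("right face:", round(flat_shade(right_face, light), 3))
```

Each face receives exactly one brightness value, so the intensity jumps at the shared edge. On a curved object approximated by many such faces, these jumps are what make the polygons visible.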

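The more advanced methods are the Gouraud and Phong shading models. Both rely on interpolation across the polygon: Gouraud shading computes the lighting at each vertex and interpolates the resulting colors, while Phong shading interpolates the vertex normals and computes the lighting for every pixel. The result is a much smoother appearance for round geometries.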

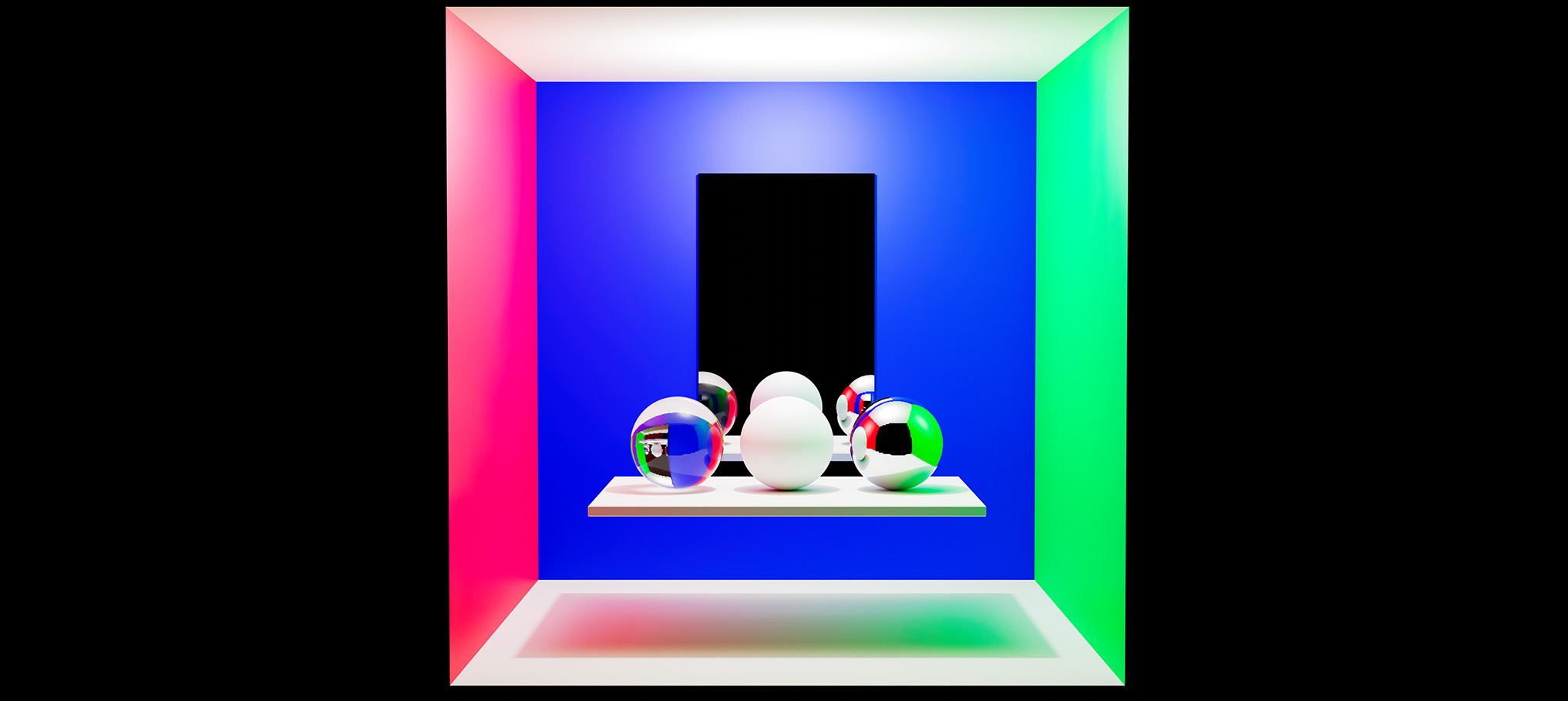
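The difference between the two interpolation strategies can be shown on a single edge between two vertices. The sketch below is an illustration under assumptions: it uses only a diffuse term, a position parameter `t` between 0 and 1 along the edge, and hypothetical function names. Gouraud shading lights the two vertices and blends the results; Phong shading blends the normals and lights each point.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def lerp(a, b, t):
    return tuple((1.0 - t) * x + t * y for x, y in zip(a, b))

def diffuse(normal, light_dir):
    return max(0.0, dot(normal, light_dir))

def gouraud(n0, n1, light_dir, t):
    # Light once per vertex, then interpolate the resulting intensities.
    return (1.0 - t) * diffuse(n0, light_dir) + t * diffuse(n1, light_dir)

def phong_interpolation(n0, n1, light_dir, t):
    # Interpolate the normal first, then compute the lighting at this point.
    return diffuse(normalize(lerp(n0, n1, t)), light_dir)

# Vertex normals tilted 60 degrees to either side, light shining straight along +z.
angle = math.radians(60)
n0 = (-math.sin(angle), 0.0, math.cos(angle))
n1 = (math.sin(angle), 0.0, math.cos(angle))
light = (0.0, 0.0, 1.0)

for t in (0.0, 0.5, 1.0):
    print(t, round(gouraud(n0, n1, light, t), 3), round(phong_interpolation(n0, n1, light, t), 3))
```

Both methods agree at the vertices. In the middle of the edge, the interpolated normal points straight at the light, so the per-pixel approach finds a bright spot that the per-vertex approach misses. This is why Phong shading handles highlights inside a polygon better, at the cost of more work per pixel.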

Global Shading | Path Tracer

Global shading methods calculate "all" interactions between the light sources and the objects. This means that an object's color can "bleed" onto neighboring objects, and that reflections are rendered accurately.

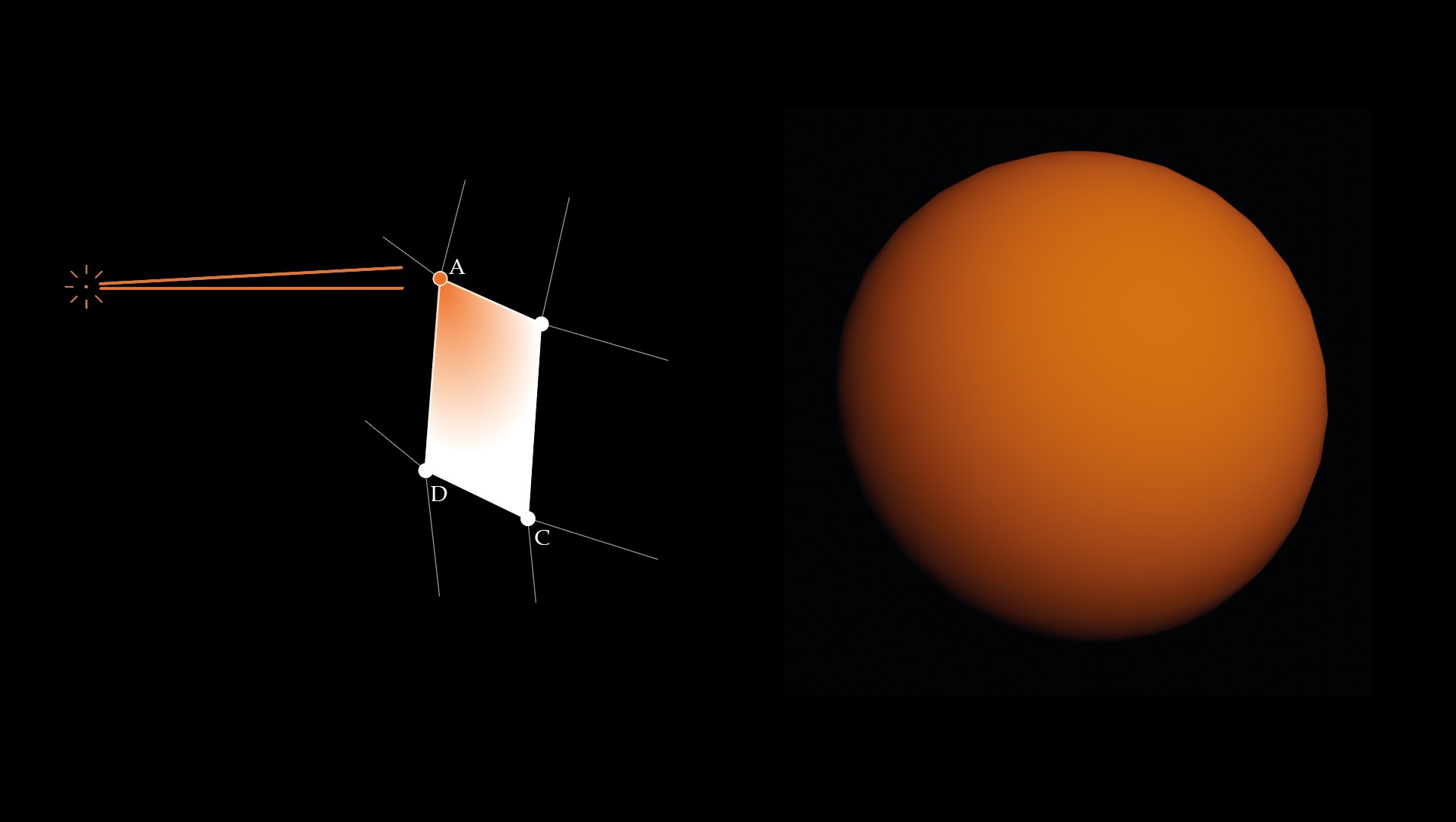

One of the most common global shading techniques is path tracing. The path tracer shoots multiple rays per pixel into the scene, where they interact with the objects. One difference between a path tracer and other ray tracers is that each ray spawns only one new ray at every interaction with an object, instead of branching into several. The rendered picture is noisy at first, but as more rays are calculated the noise decreases and the image becomes clearer.
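The relationship between rays per pixel and noise can be demonstrated with a toy model. The sketch below is not a real path tracer: it has no geometry and assumes a closed room in which every surface emits the same amount of light (`EMISSION`) and reflects the same fraction (`ALBEDO`). Both constants and all function names are hypothetical. What it does keep is the core idea: each path continues with a single new ray per bounce, and a pixel is the average of many random paths.

```python
import random
import statistics

EMISSION = 1.0  # assumed: light emitted by every surface in the toy room
ALBEDO = 0.5    # assumed: fraction of incoming light every surface reflects

def trace_path(rng):
    """Follow one path; every bounce spawns exactly one new ray."""
    radiance = 0.0
    while True:
        radiance += EMISSION
        # Russian roulette: continue with probability ALBEDO, which keeps
        # the path weight at 1 and the estimate unbiased.
        if rng.random() >= ALBEDO:
            return radiance

def render_pixel(samples, rng):
    """Average several random paths to estimate one pixel value."""
    return sum(trace_path(rng) for _ in range(samples)) / samples

def noise(samples, pixels=500, seed=1):
    """Spread of pixel values across an image that should be uniformly bright."""
    rng = random.Random(seed)
    return statistics.pstdev([render_pixel(samples, rng) for _ in range(pixels)])

for samples in (1, 16, 256):
    print(samples, "paths per pixel, noise:", round(noise(samples), 3))
```

In this toy room the exact pixel value is `EMISSION / (1 - ALBEDO)`, so every pixel should converge to the same brightness. The measured spread falls roughly with the square root of the number of paths, which is why halving the noise costs about four times the render time.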

But calculating more rays means more rendering time. To keep the rendering time in check, a denoiser can clean up an image that was rendered with only a few rays per pixel.

Game Engine | Lumen

Many new game engines incorporate ray tracing features to simulate light interactions such as reflections and indirect lighting. As shown in a previous article, the Lumen system of Unreal Engine 5 can simulate such light interactions in real time.
